Artificial intelligence systems now power search engines, chatbots, recommendation systems, social platforms, enterprise automation tools, and generative AI applications. As these systems become more deeply integrated into business and consumer environments, concerns about harmful AI outputs have intensified. Toxic language, misinformation, hate speech, biased recommendations, unsafe responses, and hallucinated content can damage user trust and expose organizations to regulatory and reputational risks.

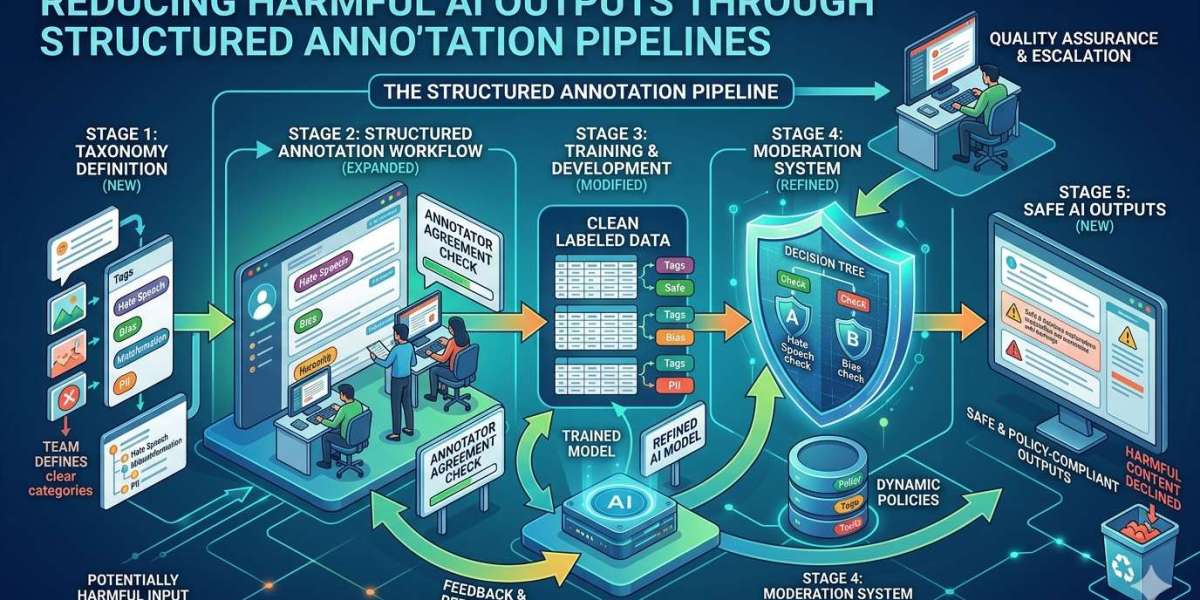

To address these challenges, organizations must move beyond basic model training and adopt structured annotation pipelines that improve the quality, safety, and contextual accuracy of AI systems. At Annotera, we understand that high-performing AI models rely heavily on the quality of annotated training data and the workflows used to manage it. A carefully designed annotation pipeline creates the foundation for safer and more reliable AI behavior.

As a leading data annotation company, Annotera helps organizations build scalable annotation systems that reduce harmful outputs while improving overall model performance.

The Growing Problem of Harmful AI Outputs

AI systems learn from massive datasets collected from websites, social media platforms, forums, customer interactions, and other digital sources. While these datasets provide scale, they also contain biased, offensive, misleading, or harmful information. Without proper filtering and annotation, models can unintentionally reproduce these harmful patterns.

Examples of harmful AI outputs include:

- Toxic or abusive language generation

- Discriminatory or biased responses

- Unsafe healthcare or legal recommendations

- Misinformation and fabricated facts

- Inappropriate content suggestions

- Harmful conversational behavior in chatbots

- Failure to detect dangerous or policy-violating content

Many organizations initially focus on model architecture improvements to solve these problems. However, the underlying issue often lies in poor-quality training data and inconsistent annotation methodologies.

This is where a structured annotation pipeline becomes essential.

What Is a Structured Annotation Pipeline?

A structured annotation pipeline is a systematic process used to collect, classify, review, validate, and continuously improve labeled data for machine learning systems. Instead of relying on ad hoc tagging processes, structured pipelines establish clear workflows, annotation standards, quality checks, escalation procedures, and feedback loops.

A mature annotation pipeline typically includes:

- Data collection and preprocessing

- Content categorization frameworks

- Annotation guidelines and taxonomies

- Multi-layer quality assurance

- Human review and escalation workflows

- Bias detection mechanisms

- Continuous retraining feedback loops

- Performance monitoring and evaluation
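To make the stages above more concrete, here is a minimal Python sketch of how a single item might be tracked as it moves through such a pipeline. The stage names, the `AnnotationRecord` fields, and the `advance` helper are illustrative assumptions, not a description of any particular annotation tool.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Stage(Enum):
    # Hypothetical stage names, loosely following the list above.
    COLLECTED = 1
    ANNOTATED = 2
    QA_REVIEWED = 3
    APPROVED = 4


@dataclass
class AnnotationRecord:
    item_id: str
    text: str
    label: Optional[str] = None
    stage: Stage = Stage.COLLECTED
    history: list = field(default_factory=list)


def advance(record: AnnotationRecord, note: str) -> AnnotationRecord:
    """Move a record to the next stage and log the reason."""
    if record.stage is Stage.APPROVED:
        raise ValueError("record is already approved")
    if record.stage is Stage.COLLECTED and record.label is None:
        raise ValueError("a label is required before leaving COLLECTED")
    record.stage = Stage(record.stage.value + 1)
    record.history.append(f"{record.stage.name}: {note}")
    return record
```

Keeping a per-record history is one simple way to make later audits and escalations traceable.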

Organizations working with a professional text annotation company can build annotation infrastructures that support long-term AI safety and reliability.

Why Annotation Quality Directly Impacts AI Safety

AI models learn patterns from the examples they see during training. If harmful content is mislabeled, inconsistently tagged, or underrepresented, the model may fail to recognize dangerous outputs during deployment.

For example:

- Hate speech hidden behind sarcasm may go undetected

- Contextual threats may be classified as harmless

- Cultural nuances may be ignored

- Biased language patterns may be reinforced unintentionally

Structured annotation pipelines improve safety by ensuring that training datasets contain accurate contextual labeling across a wide range of scenarios.

At Annotera, our annotation teams focus heavily on contextual understanding rather than isolated keyword tagging. This approach allows AI systems to better interpret intent, sentiment, severity, and conversational context.

The Role of Human Review in Reducing Harmful Outputs

Fully automated moderation systems often struggle with nuanced language, regional dialects, humor, coded speech, and emerging harmful trends. Human reviewers remain critical for identifying complex safety risks that automated systems may overlook.

Human-in-the-loop workflows strengthen annotation pipelines by introducing expert validation at key stages of model training.

Human reviewers help:

- Interpret ambiguous content

- Detect contextual toxicity

- Identify misinformation patterns

- Recognize evolving harmful language

- Improve labeling consistency

- Escalate edge-case scenarios
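One common way to combine automated labeling with human review is to escalate items when the classifier is unsure or when the category is high-risk. The sketch below assumes a hypothetical `Prediction` record, an arbitrary confidence threshold, and made-up category names; real values would come from platform policy.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    text: str
    label: str         # label proposed by an automated classifier
    confidence: float  # classifier confidence in [0, 1]


# Assumed values for illustration only.
REVIEW_THRESHOLD = 0.85
ALWAYS_REVIEW = {"self_harm", "threat"}


def route(pred: Prediction) -> str:
    """Return 'auto' to accept the machine label, or 'human' to escalate."""
    if pred.label in ALWAYS_REVIEW:
        return "human"  # high-risk categories always get a reviewer
    if pred.confidence < REVIEW_THRESHOLD:
        return "human"  # the model is unsure, so a person decides
    return "auto"
```

Lowering the threshold sends fewer items to reviewers but accepts more unverified machine labels, so the value is a cost and risk trade-off rather than a fixed constant.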

A reliable data annotation outsourcing strategy ensures access to experienced linguistic specialists and domain experts who can support large-scale annotation projects without compromising quality.

Building Consistent Annotation Guidelines

One of the biggest challenges in AI safety training is annotation inconsistency. Different annotators may interpret the same content differently if guidelines are vague or incomplete.

Structured annotation pipelines solve this issue by implementing detailed annotation documentation that defines:

- Harm severity levels

- Offensive language categories

- Context interpretation rules

- Escalation criteria

- Cultural sensitivity standards

- Policy violation examples

- Acceptable ambiguity thresholds

Clear annotation protocols improve inter-annotator agreement and create more reliable datasets for model training.
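Inter-annotator agreement can be quantified. One widely used measure for two annotators is Cohen's kappa, which corrects the raw agreement rate for agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). The function below is a minimal sketch; the function name and the example labels are illustrative.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Observed agreement: share of items where both annotators match.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: from each annotator's own label frequencies.
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    expected = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["toxic", "safe", "safe", "toxic"]
annotator_2 = ["toxic", "safe", "toxic", "toxic"]
print(cohens_kappa(annotator_1, annotator_2))  # 0.5
```

A kappa that stays low after guideline updates suggests the guidelines still leave room for differing interpretations.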

As an experienced text annotation company, Annotera develops customized annotation frameworks tailored to each client’s platform policies, regulatory requirements, and AI objectives.

Multi-Layer Quality Assurance Improves Reliability

Quality assurance is one of the most important components of structured annotation pipelines. Without strong QA systems, annotation errors can propagate into production models and increase harmful AI behavior.

Effective QA processes include:

Peer Reviews

Annotated samples are reviewed by additional annotators to validate consistency and accuracy.

Expert Audits

Senior reviewers evaluate high-risk datasets and complex moderation categories.

Consensus Scoring

Multiple annotators independently label the same content to measure agreement rates.

Real-Time Feedback

Annotators receive continuous coaching and clarification updates to improve performance.

Performance Benchmarking

Teams are evaluated against predefined accuracy thresholds and compliance metrics.
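Consensus scoring, as described above, can be sketched as a majority vote plus an agreement rate per item, with low-agreement items flagged for an expert audit. The function name and the 0.6 threshold are assumptions chosen for illustration.

```python
from collections import Counter


def consensus(labels, min_agreement=0.6):
    """Return (majority_label, agreement_rate, needs_audit) for one item.

    labels: the labels assigned independently by each annotator.
    """
    if not labels:
        raise ValueError("at least one label is required")
    top_label, top_count = Counter(labels).most_common(1)[0]
    agreement = top_count / len(labels)
    # Items below the threshold go to a senior reviewer instead of
    # being accepted on the majority vote alone.
    return top_label, agreement, agreement < min_agreement
```

For example, `consensus(["toxic", "toxic", "safe"])` accepts "toxic" with two of three votes, while three different labels on one item would fall below the threshold and be flagged.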

Organizations leveraging text annotation outsourcing services benefit from scalable QA systems that maintain quality across large annotation volumes.

Bias Reduction Through Diverse Annotation Teams

Bias in AI systems often originates from imbalanced datasets or limited cultural representation during annotation. Structured pipelines reduce this risk by incorporating diverse reviewer perspectives and inclusive labeling strategies.

Diverse annotation teams help identify:

- Cultural stereotypes

- Gender bias

- Regional sensitivities

- Political context variations

- Minority-targeted hate speech

- Language-specific harmful terminology
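A simple starting point for spotting imbalance is to compare how often content from different groups receives a harmful label. The sketch below assumes each record carries a group attribute (for example, language or dialect) and a label; the function names and the "toxic" label are illustrative. A large gap is a prompt for human audit, not proof of bias on its own.

```python
from collections import defaultdict


def flag_rate_by_group(records, flagged_label="toxic"):
    """records: iterable of (group, label) pairs. Returns {group: rate}."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, label in records:
        totals[group] += 1
        if label == flagged_label:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def max_disparity(rates):
    """Largest gap in flag rate between any two groups."""
    return max(rates.values()) - min(rates.values())
```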

Annotera prioritizes diversity within annotation operations to improve dataset fairness and reduce unintended discriminatory outcomes in AI models.

Continuous Feedback Loops for Safer AI

AI safety is not a one-time project. Harmful content patterns evolve constantly, especially on social media and other digital platforms. Structured annotation pipelines support continuous improvement through iterative feedback mechanisms.

Feedback loops allow organizations to:

- Retrain models using newly identified harmful patterns

- Update moderation taxonomies regularly

- Improve edge-case handling

- Adapt to emerging compliance regulations

- Monitor production model failures

- Refine annotation guidelines over time

Continuous retraining helps AI systems remain aligned with evolving safety standards and user expectations.
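A feedback loop of this kind can be sketched as collecting production outputs where a human reviewer overrode the model, then releasing them for retraining once enough have accumulated. The `ReviewedOutput` record, the function name, and the batch size are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ReviewedOutput:
    text: str
    model_label: str  # what the production model predicted
    human_label: str  # what the reviewer decided


def build_retraining_batch(reviewed, min_batch=100):
    """Return corrected (text, label) pairs, or None if too few so far."""
    corrections = [
        (r.text, r.human_label)
        for r in reviewed
        if r.model_label != r.human_label  # keep only model mistakes
    ]
    if len(corrections) < min_batch:
        return None
    return corrections
```

Recurring corrections in the same category are also a useful signal that the annotation guidelines or taxonomy for that category need updating.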

A strategic data annotation outsourcing partnership enables businesses to scale these continuous learning workflows efficiently.

Industry Applications of Structured Annotation Pipelines

Structured annotation pipelines are essential across multiple industries where AI safety and content accuracy directly impact user trust.

Social Media Platforms

AI moderation systems require accurate labeling of hate speech, harassment, misinformation, and harmful user-generated content.

Healthcare AI

Medical AI systems must avoid unsafe recommendations, inaccurate diagnoses, or misleading healthcare guidance.

Financial Services

AI-powered financial platforms need safeguards against fraudulent recommendations and biased decision-making.

Customer Support Automation

Conversational AI tools must detect abusive language, emotional distress, and sensitive escalation scenarios.

Generative AI Platforms

Large language models benefit from reinforcement learning based on high-quality human feedback and from safety-focused annotations.

Organizations across these sectors increasingly rely on experienced data annotation partners to support responsible AI deployment.

Why Businesses Choose Annotation Outsourcing

Managing large-scale annotation operations internally can be resource-intensive and difficult to scale. Many organizations turn to data annotation outsourcing providers for specialized expertise, operational flexibility, and faster turnaround times.

Benefits of outsourcing include:

- Access to trained annotation professionals

- Scalable workforce management

- Reduced operational overhead

- Faster dataset processing

- Multi-language annotation support

- Dedicated QA infrastructure

- Continuous workflow optimization

Partnering with a trusted text annotation outsourcing provider allows businesses to focus on AI innovation while maintaining strong safety standards.

How Annotera Supports Responsible AI Development

At Annotera, we help organizations design structured annotation pipelines that improve AI reliability, reduce harmful outputs, and strengthen model governance.

Our services include:

- Text annotation for AI safety training

- Toxicity and harmful content labeling

- Human-in-the-loop moderation workflows

- Multi-language annotation support

- Quality assurance management

- Dataset validation and auditing

- Bias reduction strategies

- Scalable annotation operations

As a specialized data annotation company, Annotera combines human expertise, scalable workflows, and quality-driven processes to help organizations build safer and more trustworthy AI systems.

Conclusion

Reducing harmful AI outputs requires more than advanced algorithms. The true foundation of responsible AI lies in high-quality data, structured annotation workflows, and continuous human oversight.

Structured annotation pipelines help organizations improve moderation accuracy, minimize bias, strengthen compliance, and create AI systems that better understand context and human behavior. By combining detailed annotation standards, robust QA processes, and ongoing feedback loops, businesses can significantly reduce the risks associated with harmful AI outputs.

As AI adoption continues to grow, organizations must prioritize responsible training methodologies that support long-term trust and safety. Working with an experienced text annotation company like Annotera provides the expertise and scalability needed to build reliable AI systems that align with evolving ethical and regulatory expectations.